I previously blogged about ensuring that the “ON CONFLICT” directive is used in order to avoid vacuum from having to do additional work. I also later demonstrated the characteristics of how the use of the MERGE statement will accomplish the same thing.

You can read the original blogs here Reduce Vacuum by Using “ON CONFLICT” Directive and here Follow-Up: Reduce Vacuum by Using “ON CONFLICT” Directive

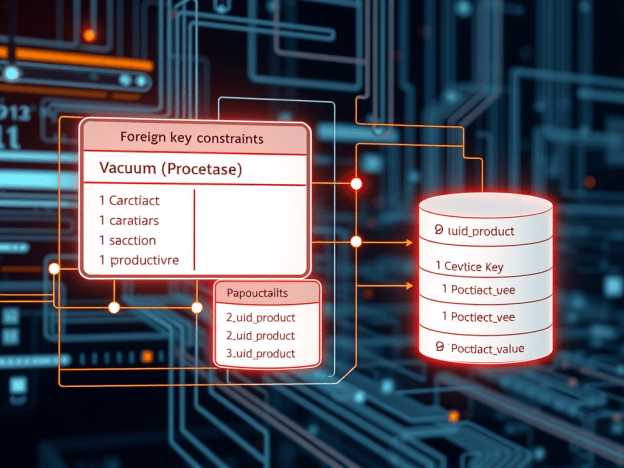

Now in another recent customer case, I was chasing down why the application was invoking 10s of thousands of Foreign Key and Constraint violations per day and I began to wonder, if these kinds of errors also caused additional vacuum as described in those previous blogs. Sure enough it DEPENDS.

Let’s set up a quick test to demonstrate:

/* Create related tables: */

CREATE TABLE public.uuid_product_value (

id int PRIMARY KEY,

pkid text,

value numeric,

product_id int,

effective_date timestamp(3)

);

CREATE TABLE public.uuid_product (

product_id int PRIMARY KEY

);

ALTER TABLE uuid_product_value

ADD CONSTRAINT uuid_product_value_product_id_fk

FOREIGN KEY (product_id)

REFERENCES uuid_product (product_id) ON DELETE CASCADE;

/* Insert some mocked up data */

INSERT INTO public.uuid_product VALUES (

generate_series(0,200));

INSERT INTO public.uuid_product_value VALUES (

generate_series(0,10000),

gen_random_uuid()::text,

random()*1000,

ROUND(random()*100),

current_timestamp(3));

/* Vacuum Analyze Both tables */

VACUUM (VERBOSE, ANALYZE) uuid_product;

VACUUM (VERBOSE, ANALYZE) uuid_product_value;

/* Verify that there are no dead tuples: */

SELECT

schemaname,

relname,

n_live_tup,

n_dead_tup

FROM

pg_stat_all_tables

WHERE

relname in ('uuid_product_value', 'uuid_product');

schemaname | relname | n_live_tup | n_dead_tup

------------+--------------------+------------+------------

public | uuid_product_value | 10001 | 0

public | uuid_product | 201 | 0

Then, let’s issue a simple insert that will violate the FK and check to see if dead tuples were generated:

/* Insert a row that violates the FK, without the ON CONFLICT directive */

INSERT INTO public.uuid_product_value VALUES (

generate_series(10001,10001),

gen_random_uuid()::text,

random()*1000,

202, /* we know this product_id doesn't exist in the parent */

current_timestamp(3));

ERROR: insert or update on table "uuid_product_value" violates foreign key constraint "uuid_mod_test_product_id_fk"

DETAIL: Key (product_id)=(202) is not present in table "uuid_product".

Time: 3.065 ms

And now check the tuple stats:

SELECT

schemaname,

relname,

n_live_tup,

n_dead_tup

FROM

pg_stat_all_tables

WHERE

relname in ('uuid_product_value', 'uuid_product');

schemaname | relname | n_live_tup | n_dead_tup

------------+--------------------+------------+------------

public | uuid_product_value | 10001 | 1

public | uuid_product | 201 | 0

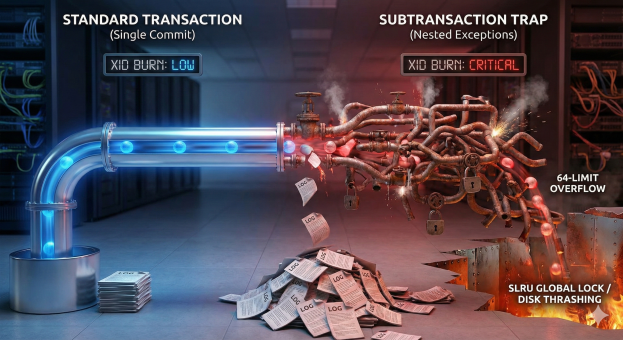

Sure enough, we now have a dead row as a result of the FK violation on the insert. But, will an “ON CONFLICT” directive help us in this scenario like in the others?

/* Insert a row that violates the FK, but with the ON CONFLICT directive */

INSERT INTO public.uuid_product_value VALUES (

generate_series(10001,10001),

gen_random_uuid()::text,

random()*1000,

202, /* we know this product_id doesn't exist in the parent */

current_timestamp(3)) ON CONFLICT DO NOTHING;

/* Verify the tuple stats: */

SELECT

schemaname,

relname,

n_live_tup,

n_dead_tup

FROM

pg_stat_all_tables

WHERE

relname in ('uuid_product_value', 'uuid_product');

schemaname | relname | n_live_tup | n_dead_tup

------------+--------------------+------------+------------

public | uuid_product_value | 10001 | 2

public | uuid_product | 201 | 0

Unfortunately, it does not solve this problem. So we need to really be cognizant of FK violations and its effect on vacuum. Now what about trying to insert a NULL into a NOT NULL column? Will that result in a dead row? Let’s check.

/* Alter a column to NOT NULL */

ALTER TABLE public.uuid_product_value

ALTER COLUMN pkid SET NOT NULL;

/* Check the table definition */

Table "public.uuid_product_value"

Column | Type | Collation | Nullable | Default

----------------+--------------------------------+-----------+----------+---------

id | integer | | not null |

pkid | text | | not null |

value | numeric | | |

product_id | integer | | |

effective_date | timestamp(3) without time zone | | |

Indexes:

"uuid_mod_test_pkey" PRIMARY KEY, btree (id)

"uuid_mod_test_product_id_idx" btree (product_id) WHERE id >= 1 AND id <= 1000

"uuid_mod_test_product_id_idx1" hash (product_id)

Foreign-key constraints:

"uuid_mod_test_product_id_fk" FOREIGN KEY (product_id) REFERENCES uuid_product(product_id) ON DELETE CASCADE

/* Insert a row that violates the NOT NULL constraint */

INSERT INTO public.uuid_product_value VALUES (

generate_series(10001,10001),

NULL,

random()*1000,

200,

current_timestamp(3));

ERROR: null value in column "pkid" of relation "uuid_product_value" violates not-null constraint

DETAIL: Failing row contains (10001, null, 613.162063338205, 200, 2026-03-13 14:25:28.758).

/* Verify the tuple stats: */

SELECT

schemaname,

relname,

n_live_tup,

n_dead_tup

FROM

pg_stat_all_tables

WHERE

relname in ('uuid_product_value', 'uuid_product');

schemaname | relname | n_live_tup | n_dead_tup

------------+--------------------+------------+------------

public | uuid_product_value | 10001 | 2

public | uuid_product | 201 | 0

As you can see, a violation of the NOT NULL constraint does not have the same behavior as a violation of the FK constraint. It’s always good to know and relay to the application development staff what operations are going to result in more work for the database and adjust the code accordingly. Enjoy!